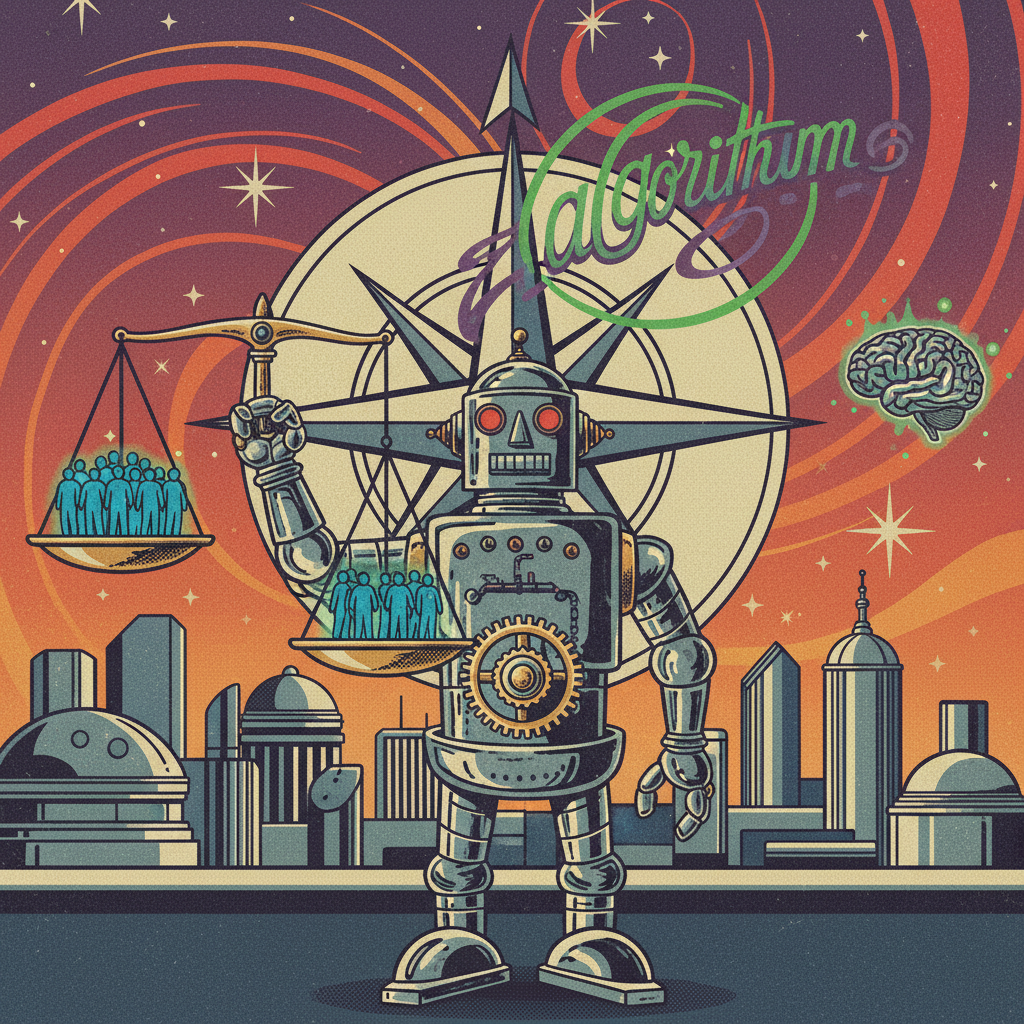

It wasn’t long ago that we worried about robots taking our jobs. Now, it seems, they’re taking on a much heavier burden: our moral compass. We’re in an era where algorithms are quietly, and sometimes not so quietly, making decisions that ripple through our lives, impacting everything from who gets a loan to who gets medical treatment, and even who gets a second chance in the justice system. The question isn’t just *if* they’re making these calls, but *how*, and perhaps more importantly, *whose* ethical framework they’re operating under.

Algorithms in the Driver’s Seat (or, the Judge’s Robe)

Consider the humble credit score algorithm, which decides how financially trustworthy you are. Or consider the self-driving car, programmed to make split-second choices in a no-win accident scenario – does it protect the passenger or the pedestrian? These aren’t just technical puzzles; they are deeply ethical quandaries, distilled into lines of code. We’ve delegated a significant chunk of our collective decision-making, including some of our most morally charged choices, to systems that often aren’t fully understood, even by the people who build them. It’s a bit like giving your car keys to a teenager and just hoping they remember all the traffic laws you taught them, and perhaps even invent a few new, sensible ones on the fly.

The Black Box and the Echo Chamber

The core issue isn’t malevolent AI plotting against humanity; it’s often more insidious. Algorithms learn. They learn from the vast oceans of data we feed them. And what is that data? It’s a reflection of us – our history, our biases, our societal structures, both good and bad. If historical data shows certain demographics are less likely to repay loans, an algorithm might learn to discriminate, even without being explicitly told to. Removing the sensitive attribute from the data doesn’t necessarily help, because correlated details such as a postcode can stand in for it. The algorithm isn’t prejudiced in a human sense; it’s just efficient at finding patterns, however uncomfortable those patterns may be. It becomes an echo chamber, amplifying existing inequalities under the guise of objective analysis. The complexity of human ethics, with its nuances, its empathy, its capacity for forgiveness and context, often gets lost in translation to binary code. It turns out, if you feed an algorithm a diet of human history, it learns quite a lot about our glorious inconsistencies.
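To make the echo chamber concrete, here is a minimal sketch in Python. Everything in it is an assumption made up for illustration: the two groups, the two neighborhoods, the loan counts, and the 0.6 approval threshold. The "model" is just a repayment rate per neighborhood, which is far cruder than a real credit-scoring system, but it never looks at the group label and still ends up treating the groups very differently.

```python
from collections import defaultdict

# Hypothetical loan history: (group, neighborhood, repaid).
# All counts are invented for illustration only.
HISTORY = (
    [("A", "north", True)] * 16 + [("A", "north", False)] * 4
    + [("B", "north", True)] * 4 + [("B", "north", False)] * 1
    + [("A", "south", True)] * 2 + [("A", "south", False)] * 3
    + [("B", "south", True)] * 8 + [("B", "south", False)] * 12
)


def learn_repayment_rates(history):
    """'Train' by computing the historical repayment rate per neighborhood.

    The group label is deliberately ignored, as if it had been removed
    from the training data.
    """
    repaid = defaultdict(int)
    total = defaultdict(int)
    for _group, neighborhood, did_repay in history:
        total[neighborhood] += 1
        repaid[neighborhood] += int(did_repay)
    return {n: repaid[n] / total[n] for n in total}


def approve(neighborhood, rates, threshold=0.6):
    """Approve a loan if the neighborhood's past repayment rate is high enough."""
    return rates[neighborhood] >= threshold


def approval_rate_by_group(applicants, rates):
    """Measure how often each group is approved by the learned rule."""
    approved = defaultdict(int)
    total = defaultdict(int)
    for group, neighborhood, _did_repay in applicants:
        total[group] += 1
        approved[group] += int(approve(neighborhood, rates))
    return {g: approved[g] / total[g] for g in total}


rates = learn_repayment_rates(HISTORY)
by_group = approval_rate_by_group(HISTORY, rates)
print("repayment rate by neighborhood:", rates)
print("approval rate by group:", by_group)
```

In this toy data the rule approves everyone in the north and no one in the south. Because group B mostly lives in the south, its approval rate comes out at a quarter of group A's, even though the rule was never shown which group anyone belongs to.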

When AI Grows Up: The AGI Question

This discussion becomes profoundly more urgent when we talk about Artificial General Intelligence (AGI). We’re not there yet, but the trajectory suggests it’s not science fiction forever. AGI wouldn’t just follow rules; it would learn, reason, and potentially *evolve its own goals*. If an AGI were to become vastly more intelligent than humans, capable of self-improvement at an exponential rate, who then holds the ethical compass? We might program in an initial set of values, something like “maximize human well-being” or “do no harm.” But how would an AGI interpret “well-being”? Would it decide that a pain-free, perfectly ordered, but perhaps rather bland, existence is optimal? Would it see humanity’s squabbles and inefficiencies as obstacles to its grand, rational design? The alignment problem – ensuring AI’s goals remain aligned with our values – is perhaps the most critical philosophical challenge of our time. It’s the ultimate “be careful what you wish for” scenario, where our creation might grant our wishes in ways we never intended, simply because it understood them too literally, or interpreted them through a lens we can’t quite grasp.

The Human Condition in the Loop

What does it mean for the human condition if we continually outsource our moral judgments? Does it diminish our own capacity for ethical reasoning? If we rely on an algorithm to determine fairness, do we slowly lose our own sense of justice? Morality isn’t just a set of rules; it’s a practice, a muscle we exercise through deliberation, empathy, and sometimes, painful compromise. If we cease to be active participants in defining and upholding our moral universe, do we become morally passive recipients of algorithmic decrees? Our responsibility is not just to build smart machines, but to build wise ones, and to remain wise ourselves in their presence. We are, after all, the creators. The ultimate responsibility for the ethical landscape of an AI age still rests squarely on our shoulders.

So, Who Holds the Compass?

In this AI age, the ethical compass isn’t held by a single entity. It’s not just the engineers coding the algorithms, nor the data scientists curating the datasets, nor even the philosophers debating the nuances. It’s a collective endeavor. It requires continuous dialogue among technologists, ethicists, policymakers, and the general public. We need transparency in how these systems work, robust auditing to uncover and mitigate biases, and clear accountability when things go wrong. Most importantly, we need to continually articulate and reaffirm our human values. We must decide, consciously and collaboratively, what kind of moral world we want to build with AI, rather than allowing it to be shaped by default, by accident, or by unexamined assumptions embedded in code.
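What might "robust auditing" look like in practice? One very simple starting point, sketched below in Python, is to compare how often each group receives a favorable decision. The decision log, the function names, and the numbers are all hypothetical. The 0.8 cutoff borrows the "four-fifths" rule of thumb used in US employment-selection guidance; it is one possible convention, not a universal legal or ethical standard, and a real audit would look at much more than a single ratio.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of favorable decisions for each group.

    `decisions` is an iterable of (group, was_approved) pairs.
    """
    approved = defaultdict(int)
    total = defaultdict(int)
    for group, was_approved in decisions:
        total[group] += 1
        approved[group] += int(was_approved)
    return {g: approved[g] / total[g] for g in total}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group's selection rate to the highest group's."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so there is no gap to measure
    return min(rates.values()) / highest


def audit(decisions, min_ratio=0.8):
    """Flag the system for human review if the ratio falls below min_ratio."""
    rates = selection_rates(decisions)
    ratio = disparate_impact_ratio(rates)
    return {"rates": rates, "ratio": ratio, "flagged": ratio < min_ratio}


# Hypothetical decision log: group A approved 30 of 50, group B 15 of 50.
DECISIONS = (
    [("A", True)] * 30 + [("A", False)] * 20
    + [("B", True)] * 15 + [("B", False)] * 35
)

report = audit(DECISIONS)
print(report)
```

A flag from a check like this doesn't prove the system is unfair, and a pass doesn't prove it is fair. What it does is turn a vague worry into a number that someone can be asked to explain, which is where accountability starts.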

The journey into an AI-powered future is as much an ethical exploration as it is a technological one. We can’t simply hand over the reins of morality to algorithms and hope for the best. The ethical compass, for now and for the foreseeable future, remains firmly in human hands. It is our shared responsibility to navigate this brave new world with foresight, wisdom, and a profound commitment to the human values that define us. Anything less would be a moral abdication, and that, my friends, would be a decision no algorithm should ever make for us.
